A codebot works by reading a model of the application, including its entities, attributes and relationships. From this artefact, the bots can write upwards of 90% of the code base. Here Eban explains.

We know that Codebots adds value to a software project. But the question that remains is one of quantification. Exactly how much value does a codebot add?

To test this, we created an experimental framework. We couldn’t simply hypothesise how long our past projects would have taken without a codebot, as this would introduce internal bias. Instead, we created the Codebots field trials. The purpose of the field trials was to prove or disprove our hypothesis:

“We predict a team with a codebot will provide more value to a software development project than a team without a codebot in the same amount of time”

We invited Queensland enterprises with internal development resources to participate in a one-week trial. During that week, we assigned one project team (web developer, UX designer, tools engineer and a codebot) to develop or work on their top priority. At the end of the week, we presented what we had been able to build and asked participants a range of questions. In exchange, they retained all the source code created during the week, and the applications remained on a beta environment.

There were six participants in round 1.

Results

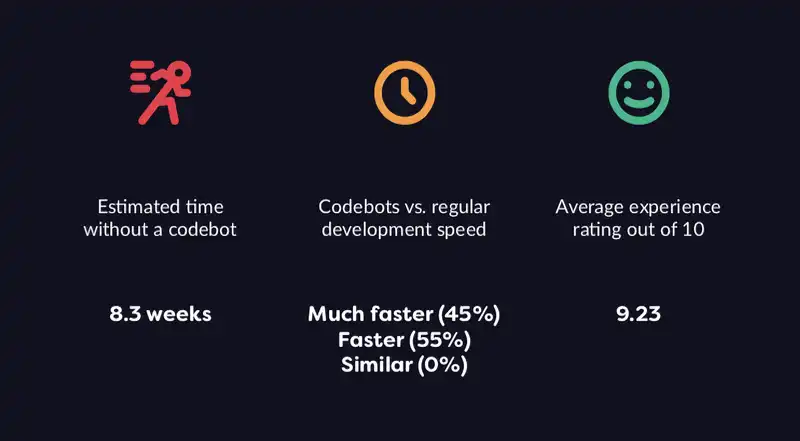

Over the six weeks of field trials, the bots wrote 143,453 lines of code (93%), with our human developers contributing 10,864 lines (7%). This meant one of two things: either our human developers need 50x more coffee, or our bots are incredibly valuable members of the team. Participants were also asked how long they believed the project would have taken using traditional software development techniques, how the speed compared with regular development, and what their experience of the field trials was like. Those results are provided in the image below.
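The split between bot-written and human-written code can be checked directly from the two line counts reported above. The following is a minimal sketch in Python; the variable names are chosen purely for illustration and are not part of any Codebots tooling.

```python
# Line counts reported for the six weeks of field trials.
bot_lines = 143_453
human_lines = 10_864

# Total code produced across all trials.
total_lines = bot_lines + human_lines

# Share of the code base written by each contributor.
bot_share = bot_lines / total_lines
human_share = human_lines / total_lines

# How many lines the bots wrote for each human-written line.
bot_to_human_ratio = bot_lines / human_lines

print(f"Total lines: {total_lines:,}")
print(f"Bot share: {bot_share:.0%}")
print(f"Human share: {human_share:.0%}")
print(f"Bot lines per human line: {bot_to_human_ratio:.1f}")
```

Rounded to whole percentages, the shares match the 93% and 7% quoted above, and the bots wrote roughly 13 lines for every line written by a human developer.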